Find the sum of n terms of the series `log a + log (a^2/b) + log (a^3/b^2) + log(a^4/b^3)...` - YouTube

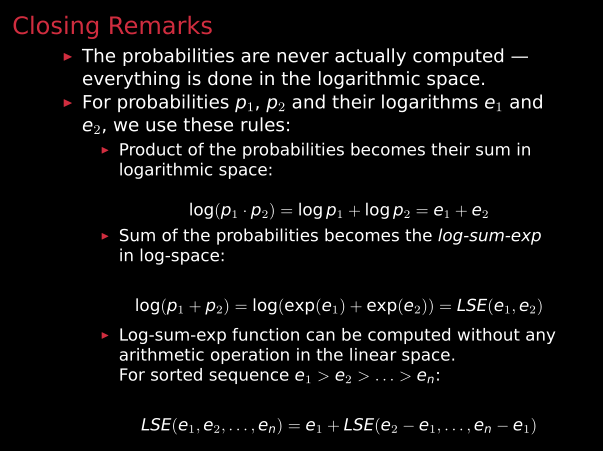

Underflow/overflow from improper log, then sum, then exp · Issue #5 · lanl-ansi/inverse_ising · GitHub

JavaScript function add(1)(2)(3)(4) to achieve infinite accumulation-step by step principle analysis - DEV Community

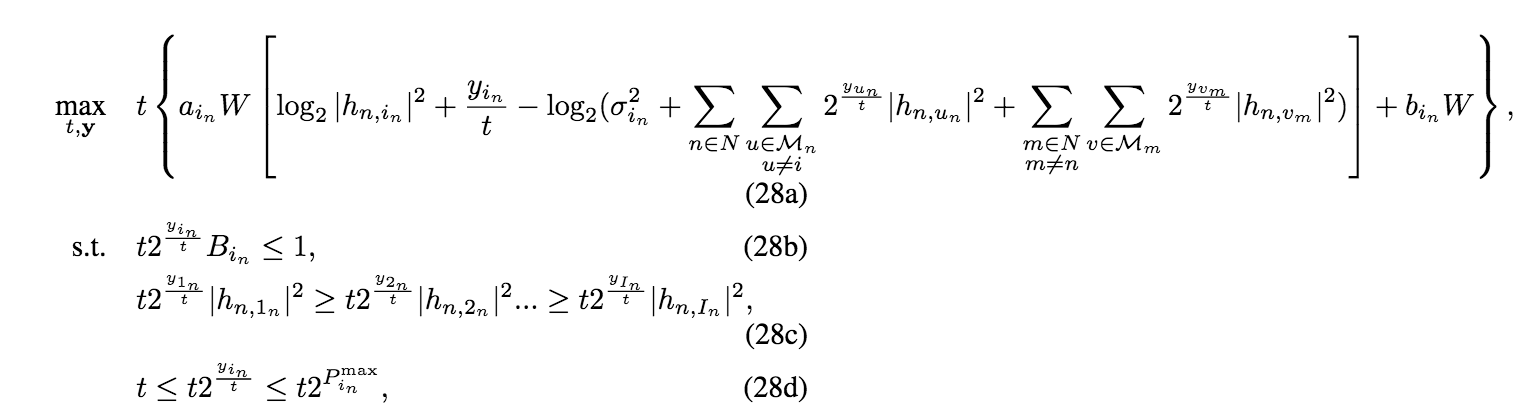

Convert the perspective function of log-sum-exp to cvx - CVX Forum: a community-driven support forum

Bound to the log-sum-exp function

There is a relatively simple way to bound the log-sum-exp by a quadratic function. An upper bound was known for the binary case since 1996. It was due to Jordan and Jaakkola in the context of variational inference for ...
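
To make the binary case concrete, here is a minimal Python sketch of the quadratic upper bound usually attributed to Jaakkola and Jordan. It is written from the commonly stated form, log(1 + e^x) <= log(1 + e^xi) + (x - xi)/2 + lambda(xi) (x^2 - xi^2) with lambda(xi) = tanh(xi/2) / (4 xi), and not from the truncated text above. The function names `softplus`, `lam`, and `quadratic_upper_bound` and the variational parameter `xi` are illustrative choices, not taken from the source.

```python
import math


def softplus(x):
    """Binary log-sum-exp, log(1 + e^x), computed without overflow."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def lam(xi):
    """Curvature term tanh(xi/2) / (4*xi); its limit as xi -> 0 is 1/8."""
    if abs(xi) < 1e-8:
        return 0.125
    return math.tanh(xi / 2.0) / (4.0 * xi)


def quadratic_upper_bound(x, xi):
    """Quadratic in x that upper-bounds softplus(x) and touches it at x = +/- xi."""
    return softplus(xi) + (x - xi) / 2.0 + lam(xi) * (x * x - xi * xi)


if __name__ == "__main__":
    xi = 2.0  # assumed variational parameter, chosen only for illustration
    for x in (-4.0, -2.0, 0.0, 2.0, 4.0):
        gap = quadratic_upper_bound(x, xi) - softplus(x)
        print(f"x={x:+.1f}  softplus={softplus(x):.6f}  gap={gap:.6f}")
```

Running the script prints the gap between the bound and the true value at a few points; the gap should vanish at x = xi and x = -xi and be positive elsewhere, which is what makes xi useful as a tunable parameter in variational schemes.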